The idea of building an “artificially intelligent” machine is nearly as old as mythology, with the brilliant god-blacksmith Hephaestus constructing various automatons: the breathtaking Talos tossing stones at would-be invaders of the isle of Crete, golden maidens to help him in his daily tasks, and the bird-women singing at Apollo’s shrine in Delphi.

As of 2023, the creation of intelligent machines, whether to guard, to help in daily tasks, or just to entertain, is no longer fiction. According to IDC estimates, global spending on AI-centric systems was expected to reach $118 billion in 2022 and to surpass $300 billion in 2026. Several factors drive this growth.

IBM research shows that one in four companies and business leaders are willing to adopt artificial intelligence (AI) due to labor or skill shortages, and one in five is doing so due to environmental pressures. The research indicates that the key drivers of AI adoption are the increasing accessibility of AI services (43% of respondents say so), the need to reduce costs and automate processes (42%), and the fact that many off-the-shelf business tools already have AI solutions embedded.

The business landscape of AI is dynamic and challenging, with changes arriving almost every day. Yet it is not impossible to keep an eye on the trends and overall tendencies shaping the world of tomorrow. Near the end of 2021, the Tooploox AI team selected the top 7 AI trends of 2022.

But first things first, what is an AI trend?

What is an AI or ML trend?

A “trend” is much broader than a single event or technology – it is rather a bundle that combines several concepts sharing a common thread or idea. The shared thread is the trend, followed by examples and technologies to be implemented.

Considering that, we have chosen the trends below:

- Generative AI

- Trustworthy AI and AI ethics

- AI and society

- Workforce augmentation

- Step-by-step improvements of existing techniques

Enjoy the read!

Trends in AI for 2023

Considering the complexity of modern AI and ML, spotting developments in artificial intelligence and machine learning that are broad enough to count as real trends can be challenging, because nearly everything appears to be important. To save you the struggle, we have done the job and collected them below.

AI Trend 1: Generative AI

Generative AI refers to the models and techniques that enable neural networks to generate data, be it images, sounds, signals or text. This year has witnessed the popularization of publicly available generative networks that can be used with basically no technical knowledge. DALL-E 2 and ChatGPT are the best examples.

Natural language processing models

Natural language processing (NLP) is a field of computer science, artificial intelligence, and computational linguistics concerned with the interactions between computers and human (natural) languages. Natural language processing models are algorithms or systems that are designed to process and analyze large amounts of natural language data.

These models can be used in a variety of tasks, such as language translation, text summarization, sentiment analysis, and topic modeling. Some popular NLP models include recurrent neural networks (RNNs), convolutional neural networks (CNNs), and transformers. These models are trained on large amounts of text data and are able to extract information and generate human-like text.

One of the most convincing arguments for the effectiveness of modern NLP models is the fact that the text above was fully generated using ChatGPT. What’s even more interesting, the tool needed less than a week to reach a million users. The mechanism can find applications in delivering a better customer experience, providing users with more natural-sounding answers, and supporting the delivery of content.

AI-generated Art (and beyond)

Apart from generating text, AI can also deliver images in various styles and versions. 2022 saw two major tools go public – DALL-E 2 and Midjourney. When the portrait of Edmond de Belamy was sold for $432,500 in 2018, it was obvious that the painting was far from being the work of a skilled artist.

Modern generative AI models can deliver basically any image – from realistic-looking human faces to unreal images generated from text prompts. Some examples can be found in one of our recent AI news texts.

The examples are so good that AI-generated art has recently won a contest, enraging more traditional artists claiming that the machine-generated outcome is hardly a work of art. Also, who owns the copyright to images created this way – the AI? The AI-delivering company? The author of the prompt?

There is no clear answer to this question, with some people just generating images using prompts, others (including Tooploox engineer Ivona Tautkute-Rustecka) delivering new forms of visual art using neural networks, and everything in between.

AI-augmented coding

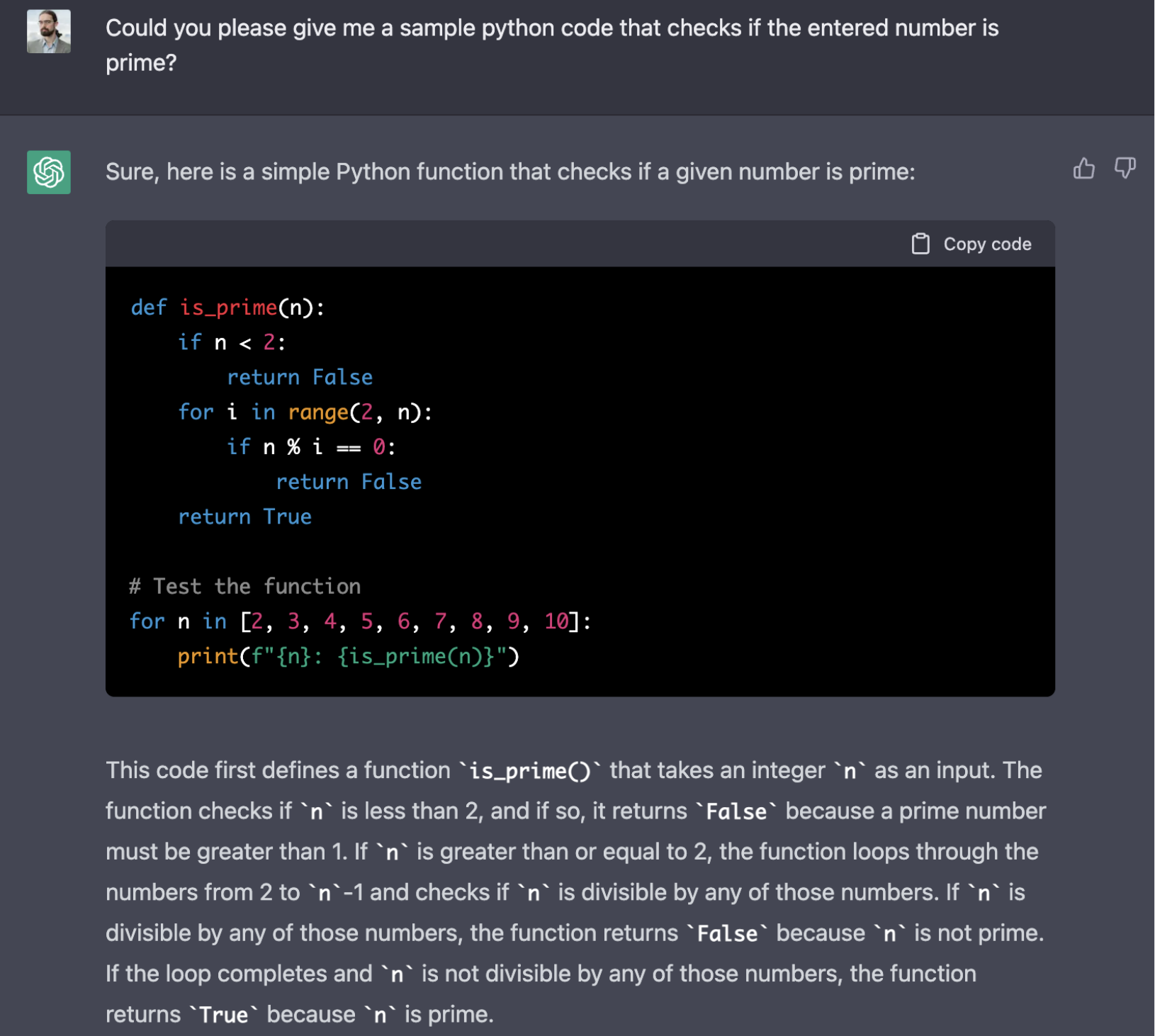

2022 also witnessed the introduction of GitHub Copilot, basically an AI pair programmer. The system does a job comparable to a text autocomplete tool, yet it works with programming languages instead of natural ones.

Interestingly, ChatGPT, mentioned above, can also respond to programming-related questions, including producing the requested code.

The quality of the returned results continues to amaze users. Language modeling, be that in English or Python, will undoubtedly be shaping the future of AI in the year 2023.

AI Trend 2: AI ethics

The increasing number of AI-powered applications exposes more people to AI’s biases, glitches, and malfunctions. As such, there is an increasing need to deliver reliable and secure frameworks for AI solutions to effectively work and do no harm.

Explainability

AI used to be seen as a “black box” where the user enters their input and the output is generated out of a void, or rather out of unexplainable computations done without the touch of a human programmer. If the AI’s job is to find faces in a picture or deliver an image from a prompt, the bias may not be that catastrophic. Yet if the tool is to be used in healthcare or finance, where its recommendations would have a strong influence on people’s lives, problems arise.

Explainability, or the ability to check what factors influence a model’s decisions and outcomes, is one of the key challenges in AI design and control. That’s why Tooploox teams put a strong emphasis on explainability in their models and solutions. For example, a recent blog post about data annotation problems in healthcare with self-supervised learning shows the “eye tracking”-like evaluation method used to control model performance.

Dataset building and management

The root cause of ethical issues in machine learning is the biased and imbalanced datasets used to train solutions. Probably the most significant example of AI bias is the Amazon recruiting algorithm that penalized applications from female engineers.

No matter how sophisticated an algorithm is, the model will reflect the implicit biases of its training data in its behavior. For example, due to an underrepresentation of female voices in datasets, automated captioning models are up to 13% more accurate when working with male voices. When a person speaks with a strong or uncommon accent, accuracy falls even further.
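A gap like the one described above only becomes visible when accuracy is measured per group rather than as a single overall number. The snippet below is a minimal sketch of such a check, not a full fairness audit. The function names and the toy records are hypothetical and exist only for illustration.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Return {group: accuracy} for (group, predicted, expected) records."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, expected in records:
        total[group] += 1
        if predicted == expected:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}


def accuracy_gap(records):
    """Difference between the best and the worst per-group accuracy."""
    scores = accuracy_by_group(records)
    return max(scores.values()) - min(scores.values())


# Hypothetical toy data: (speaker group, model transcript, reference transcript).
sample = [
    ("male", "hello world", "hello world"),
    ("male", "good morning", "good morning"),
    ("female", "hello world", "hello world"),
    ("female", "good mourning", "good morning"),
]

print(accuracy_by_group(sample))
print(accuracy_gap(sample))
```

On a real project the same breakdown would be run on a held-out evaluation set, and a large gap would be a signal to revisit how the training data was collected.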

Building a dataset to power a new solution is a challenge in itself, with companies coming up with various solutions – from building them in-house to delivering sophisticated simulators to generate the required dataset or even synthetic data generation. All to deliver more accurate and less biased results.

Another challenge comes from the need for labeling. Engaging a team of experts, for example to label healthcare data, can be very costly.

AI Regulation

Artificial intelligence is a powerful force that influences society as a whole, yet it is still operating in a gray area between multiple regulations. To put an end to this situation, the European Commission is currently proceeding with the Artificial Intelligence Act in the EU.

The year 2023 is predicted to be crucial in regulating ML technology-related issues. Legal controversies around the topic are also growing, ranging from an AI’s right to use artists’ works in its training to hidden biases and discrimination in datasets that are later reflected in a system’s performance.

AI Trend 3: AI and society

The increasing popularity and prevalence of AI-based solutions increases the impact of technology on society. These tools can either improve people’s lives and bring new possibilities or wreak havoc in the social sphere using deepfakes or even significantly contribute to global warming via extensive hardware resource utilization.

Sustainable AI and machine learning

To name just the top examples – OpenAI has trained the GPT-3 model using 45 terabytes of data. IBM’s client, The Weather Company, processes 400 terabytes of data daily. Training a smaller model, Nvidia’s Megatron-LM, kept 512 V100 GPUs running for over nine days, effectively burning 27,648 kilowatt-hours. An average household uses 10,649 kilowatt-hours yearly, so a single training run consumed roughly 2.6 times that amount, nearly as much as three modern homes use in a year.
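The energy figures above can be sanity-checked with a few lines of arithmetic. The sketch below uses only the numbers quoted in this article and treats "over nine days" as exactly nine days, which is our simplifying assumption. The per-GPU power draw is not stated in the text; it is derived here from the reported total.

```python
GPUS = 512                        # from the article
DAYS = 9                          # assumption: "over nine days" taken as exactly nine
REPORTED_KWH = 27_648             # from the article
HOUSEHOLD_KWH_PER_YEAR = 10_649   # from the article

# Total GPU-hours spent on the training run.
gpu_hours = GPUS * DAYS * 24

# Average power per GPU (in kW) implied by the reported total.
implied_kw_per_gpu = REPORTED_KWH / gpu_hours

# How many household-years of electricity the run corresponds to.
household_years = REPORTED_KWH / HOUSEHOLD_KWH_PER_YEAR

print(gpu_hours)
print(implied_kw_per_gpu)
print(round(household_years, 2))
```

The reported total is consistent with a constant draw of 0.25 kW per GPU, so it presumably counts the GPUs alone and leaves out cooling, CPUs, and networking.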

The need for sustainability and reducing energy consumption is evident. The key challenge is in the fact that existing models were designed to run on a smaller scale, with their relative hunger being much easier to bear. When the model begins to gnaw on terabytes of data, its hunger rises.

Improving efficiency of generative diffusion models

One of the key challenges is to improve the efficiency of the generative models that are responsible for the marvels of AI-powered creation. The models deliver excellent results in generating images, yet take a lot of time (days, sometimes months, even years if counting a single CPU’s usage).

Cutting computation costs and boosting efficiency will be one of the key priorities of AI development. Tooploox also contributes to this trend in our recent NeurIPS paper about Analyzing Generative and Denoising Capabilities of Diffusion-based Deep Generative Models.

Deepfakes and responsible usage

Malicious users of AI technology are also responsible for creating deepfakes and propagating fake news. On the other hand, AI-powered solutions can be used to counter these challenges as well. For example, Intel has released FakeCatcher, an AI-based system that is claimed to achieve up to 96% accuracy when detecting deepfakes.

Also, Hidden Markov Model-based solutions can spot patterns in data, for example, to differentiate human-written text from AI-generated text, assuming there is a large enough training pool to spot relevant patterns.

AI Trend 4: Workforce augmentation

Artificial intelligence is increasingly used to augment and improve human labor in ways not seen before. Various studies cited in our AI news show that up to 72% of employees are willing to hand mundane and repetitive tasks off to AI-based solutions. On the other hand, the fear of machines taking over jobs is still strong – up to 14% of employees have already seen their jobs automated.

Hyperautomation

Hyperautomation is an interesting approach that blurs the borders between individual business processes and enables companies to automate entire pipelines. Using AI and Robotic Process Automation, companies can find savings and improve their effectiveness by handing the dull and repetitive tasks mentioned above over to automated systems.

Edge AI

Another phenomenon included in the workforce augmentation trend is the increasing prevalence of AI in edge devices. Transferring gargantuan amounts of data to remote data centers is sometimes necessary, yet it comes at a cost. For example, sending point cloud and lidar data from autonomous cars to a data center and back again would be madness, as it is both costly and inefficient.

Moving AI models to edge devices requires high technical proficiency. The models need to be smaller, less energy-consuming and highly optimized to perform on an edge device. A good example comes from the Light depth estimation camera, where the AI component supports traditional engineering-based techniques in delivering outstanding accuracy in depth estimation.

Use cases and applications of edge AI will only rise in 2023.

AI Trend 5: Step-by-step improvements of existing techniques

Finally, last but certainly not least, the final AI trend for 2023 is the slow yet steady, research-heavy improvement of existing techniques. Just to name some examples:

- Human in the loop – this approach to machine learning includes human feedback in the training process. Thus, it is possible to train a model with more sophisticated data and skills. Also, the human component provides automatic evaluation of the desired model.

- Mixture of experts – the mixture of experts can be compared to a group of specialists with different focuses who break a problem into pieces and solve it, with each expert tackling only the part they specialize in. A good example comes from computer vision in autonomous cars, where one network can be used to recognize humans, another to recognize road signs, and another to spot vehicles.

- Out-of-distribution detection – this approach aims to reduce the workload required in dataset management. Out-of-distribution detection is the process of automating data validation when building datasets – the system simply spots images that are odd.

For example, if someone has mistakenly uploaded their summer vacation photos to a dataset consisting of medical images, spotting them manually might be a challenge, especially in datasets consisting of tens of thousands of images. Having an automated system in place to spot the odd image would be much easier. On the other hand, training a spotting system is a challenge in itself.
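The idea of spotting the odd image can be illustrated with a deliberately simple distance check. The sketch below assumes every image has already been turned into a short feature vector (in practice, an embedding from a neural network). It flags any vector that lies much farther from the center of the trusted data than the trusted samples themselves do. The function names, the threshold `k`, and the toy vectors are all hypothetical, and production systems use far more elaborate scoring.

```python
import math
from statistics import mean, pstdev


def fit_reference(features):
    """Summarize trusted, in-distribution feature vectors."""
    dims = len(features[0])
    centroid = [mean(vec[i] for vec in features) for i in range(dims)]
    distances = [math.dist(vec, centroid) for vec in features]
    return centroid, mean(distances), pstdev(distances)


def is_outlier(vec, reference, k=3.0):
    """Flag a vector whose distance to the centroid is unusually large."""
    centroid, avg_dist, std_dist = reference
    return math.dist(vec, centroid) > avg_dist + k * std_dist


# Hypothetical 2-D features standing in for embeddings of medical images.
trusted = [
    [0.8, 0.1], [1.0, 0.0], [1.2, 0.1],
    [1.0, 0.3], [0.9, 0.2], [1.1, 0.0],
]
reference = fit_reference(trusted)

vacation_photo = [5.0, 4.0]   # far away from the medical cluster
another_scan = [1.0, 0.1]     # sits inside the cluster

print(is_outlier(vacation_photo, reference))
print(is_outlier(another_scan, reference))
```

The threshold trades missed outliers against false alarms, which is one reason why tuning such a spotting system is a challenge of its own.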

- Continual learning – this concept in data science basically enables machines to learn again and again without forgetting acquired knowledge. Neural networks often suffer from catastrophic forgetting, defined as an abrupt performance loss on previously learned tasks when acquiring new knowledge.

For instance, if a network previously trained for detecting virus infections is now retrained with data describing a recently discovered strain, the diagnostic precision for all previous strains drops significantly.

To mitigate this, we can retrain the network from scratch on a joint dataset, yet this is often infeasible due to the size of the data required, or impractical when retraining takes more time than it takes to discover another new strain. The field of machine learning that aims to address this problem is called continual learning.
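One family of continual learning methods, usually called rehearsal or replay, sits between the two extremes: instead of keeping the full joint dataset, it stores a small sample of old examples and mixes them into every batch of new data. The article does not single out any particular method, so the sketch below is only an illustration of that general idea. The class name, the capacity, and the toy examples are hypothetical, and the training step itself is left out.

```python
import random


class ReplayBuffer:
    """Fixed-size uniform sample of all examples seen so far (reservoir sampling)."""

    def __init__(self, capacity, seed=0):
        self.capacity = capacity
        self.items = []
        self.seen = 0
        self._rng = random.Random(seed)

    def add(self, example):
        """Offer one example; it is kept with probability capacity / seen."""
        self.seen += 1
        if len(self.items) < self.capacity:
            self.items.append(example)
            return
        slot = self._rng.randrange(self.seen)
        if slot < self.capacity:
            self.items[slot] = example

    def mixed_batch(self, new_examples, replay_size):
        """Return the new-task examples followed by a sample of stored old ones."""
        count = min(replay_size, len(self.items))
        return list(new_examples) + self._rng.sample(self.items, count)


# Hypothetical usage: remember a few old-strain samples, then train on a mix.
buffer = ReplayBuffer(capacity=4)
for i in range(10):
    buffer.add(("old_strain", i))

new_data = [("new_strain", 0), ("new_strain", 1)]
batch = buffer.mixed_batch(new_data, replay_size=2)
print(batch)
```

Because the buffer has a fixed size, memory use stays constant no matter how many strains arrive, at the price of seeing only a sample of the older data.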

Summary

The trends in artificial intelligence listed above will power machine learning and artificial intelligence development in the upcoming year of 2023. With the intensity of development and the amazing new applications of machine learning as witnessed today, the future is ever more interesting indeed.