After testing many generative AI-based products, I get the feeling that many of them were slapped together hurriedly and cheaply to keep up with the current fashion. After using these technologies and talking extensively with prompt engineers, developers, product designers, and, most importantly, AI itself, I have identified recurring risks that are important to consider when developing new products.

Artificial intelligence (AI) is no longer a futuristic concept – it’s here and changing how we interact with technology. In recent months, generative AI models like ChatGPT, Midjourney, and Stable Diffusion have grown in popularity. They are now present in various products, from well-planned implementations that aid in content production to more abstract or futuristic ones.

Yet we still do not understand how to develop products that employ artificial intelligence effectively, nor do we understand the patterns of user behavior or how people perceive interactions with AI.

I’ve decided to share my observations to help designers create products that better utilize generative AI models.

It’s not solving real problems

Successful products address users’ needs, solve their problems, and offer benefits. As designers, we dedicate significant effort to analyzing, researching, creating personas, and empathizing with users. However, many products fail to consider these principles when implementing generative AI, instead opting to follow the trend.

While implementing these models in their simplest form may be easy, cheap, and require minimal effort, the resulting AI product or feature often lacks long-term value and fails to solve users’ problems. Remembering the core of user-centered design, that is, solving user problems, is crucial to creating successful AI products that genuinely benefit users.

What to do?

Don’t worry about FOMO when planning product design for AI solutions. Spend ample time on thorough analysis, and don’t rush the process. Dedicating more time to needs analysis increases the likelihood of creating a product that will genuinely benefit your users. The current opportunities lie in products that set trends and create truly groundbreaking applications for generative AI.

It’s not fun to use

AI empowers us to interact with the digital world in a revolutionary and thrilling way. This opens up numerous opportunities to craft experiences that are not only captivating but also unforgettable. As we develop products, let’s not overlook the minute details that can elevate a good experience into something extraordinary. Let’s infuse it with a touch of “magic.”

What to do?

Remember your target audience and the level of “magic” you can afford. Although not every product needs to be entertaining, each one can still deliver exceptional experiences. Be proactive in identifying small details that can enhance the overall experience, and don’t be afraid to deviate from the script.

I recommend “The Power of Moments” by Chip & Dan Heath for a helpful read on this topic.

Trust deficit

There is a famous thought experiment that poses the problem of determining whether AI can think and feel like humans. Can we truly say that AI thinks and feels when it behaves indistinguishably from a human, or is it still just a set of advanced algorithms?

As humans, we can tell what others think and feel because we can relate to them based on our experiences. However, it is not that simple when talking to a computer designed to imitate us. Therefore, we must first build familiarity before we can trust it with even the most basic tasks.

People are often resistant to change. When the first cars passed down the streets, many people simply shouted “get a horse!” To help people adapt more easily, at least one early design proposed mounting a fake horse head on the front of the car. While this may seem absurd, the idea behind it holds: people accept new things more readily when they resemble something familiar. Similarly, to make AI more user-friendly, it may need to be designed to look like what we’ve already become accustomed to.

What to do?

To reduce risk, I recommend exploring the following:

- Use Familiar Patterns: Consider whether the introduction of a new UI is really necessary. For instance, if you’re designing a chatbot, ask yourself if it’s worthwhile to change how messages are composed and sent. Will it add value to the product? It may be better to adhere to the standard chat UI and its patterns to best connect the user experience with something they already know.

- Personality: Typically, these models are very rigid and polite, write complex sentences, and explain all their decisions. This creates an unnatural experience that can make users feel uncomfortable. Pay careful attention to the prompts on which you model the AI’s personality, test it iteratively, and monitor your own feelings during each interaction. Consider your brand’s identity, strategic assumptions, and the context in which the AI is designed to function.

- Avatar/Face: Design a few “faces” for the AI and test them. An avatar is an ideal element that can change the perception of an AI from an algorithm to something more. It can enable us to empathize better and create greater trust. However, be cautious not to confuse the user by designing an experience that is too similar to interacting with a real person. Striking the right balance is critical.

- Transparency: Models such as GPT-4 can be prompted to review their own answers, but this self-checking is not fully reliable. To ensure transparency, it is crucial to implement fact-checking procedures and provide sources for the information presented. Additionally, it is essential to show the AI’s process and the actions it performs to increase trust and credibility in its results.

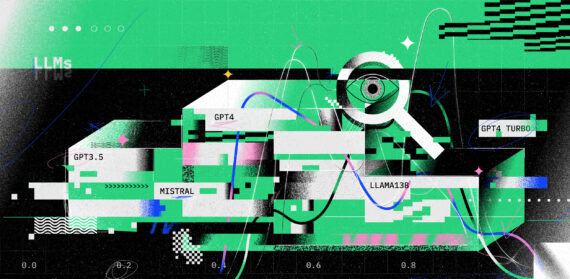

The issue with the data

Because generative AI models are available through public APIs, anyone and everyone has equal access to them. As a result, products based on those models tend to feel and function very similarly. This puts our product on par with those of our competitors, which can have significant business implications. It is, therefore, important to carefully consider how you will improve the model with your own data in order to stay ahead.

What to do?

To improve your product, build in mechanisms that let the AI learn from its users. These can include simple ratings of the model’s responses, the ability to add comments, or additional databases from which the model can draw more information. Furthermore, making the feedback forms as user-friendly and engaging as possible is crucial. After all, the more data you have, the more your model can improve.
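As a rough illustration of the rating and comment mechanisms mentioned above, here is a minimal Python sketch. Everything in it is an assumption made for the example: the `Feedback` and `FeedbackStore` names, the 1 to 5 rating scale, and the in-memory list standing in for a real database.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import Optional


@dataclass
class Feedback:
    """One user rating for one model response (hypothetical schema)."""
    response_id: str
    rating: int                     # assumed scale: 1 (poor) to 5 (great)
    comment: Optional[str] = None   # optional free-text comment


@dataclass
class FeedbackStore:
    """In-memory store for illustration; a real product would persist this."""
    items: list = field(default_factory=list)

    def add(self, response_id: str, rating: int, comment: Optional[str] = None) -> None:
        # Reject ratings outside the assumed 1-5 scale.
        if not 1 <= rating <= 5:
            raise ValueError("rating must be between 1 and 5")
        self.items.append(Feedback(response_id, rating, comment))

    def average_rating(self):
        """Mean rating across all feedback, or None if there is none yet."""
        if not self.items:
            return None
        return mean(f.rating for f in self.items)

    def low_rated(self, threshold: int = 2) -> list:
        """Feedback at or below the threshold: candidates for manual review."""
        return [f for f in self.items if f.rating <= threshold]


store = FeedbackStore()
store.add("resp-1", 5)
store.add("resp-2", 1, comment="The answer ignored my question.")
store.add("resp-3", 3)
```

Low-rated responses and their comments are usually the most useful starting point when deciding what to change in prompts or supporting data.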

Prompts are not natural

Interacting with AI requires a certain degree of expertise and nuance. Well-written prompts are crucial to getting good results from AI, but it would be unreasonable to assume that all users possess the skills necessary to write them. To give our users the best possible experience, we must build mechanisms into the product itself that teach users how to write prompts.

What to do?

To enhance the quality of interactions with AI, think about how the product can make writing prompts easier. Avoid assuming that users will know how to utilize an AI’s potential to the fullest. Introduce users to the concept of writing prompts and suggest specific actions or prompts at the start or during interactions. Look for practical teaching methods that can be cleverly embedded in the interface.
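One possible way to suggest prompts is to offer a few starter templates that the user only has to fill in. The sketch below is a hypothetical example, not a recommended set: the template texts, the `STARTER_PROMPTS` dictionary, and the `build_prompt` helper are all invented for illustration.

```python
from string import Template

# Hypothetical starter prompts a product might show next to the input field.
STARTER_PROMPTS = {
    "summarize": Template("Summarize the following text in $length sentences: $text"),
    "rewrite": Template("Rewrite the following text in a $tone tone: $text"),
}


def suggestions() -> list:
    """Names of the starter prompts, sorted for stable display."""
    return sorted(STARTER_PROMPTS)


def build_prompt(kind: str, **fields: str) -> str:
    """Fill a starter template with the user's input.

    Raises KeyError if the kind is unknown or a field is missing,
    so the interface can ask the user for what is still needed.
    """
    return STARTER_PROMPTS[kind].substitute(**fields)


prompt = build_prompt("rewrite", tone="friendly", text="Your order has shipped.")
```

Showing the filled-in prompt to the user, rather than hiding it, lets them see what a well-formed prompt looks like and gradually learn to write their own.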

Hallucinations

AI hallucination is a term used to describe instances where a generative model produces output that sounds plausible but is not grounded in real data or in the input it was given. This can take various forms, such as fabricated news reports or false assertions about historical events or scientific facts. For instance, ChatGPT can produce a detailed account of an event that never happened or present an invented biography as if it described a real person.

Although AI technology providers are constantly working to minimize this problem, we need to remain aware of its existence, as it can have a huge impact on our products’ safety and reliability.

What to do?

To mitigate the risk of hallucinations in generative AI models and their potential impact on users, I recommend the following steps:

- Regularly monitor the model’s outputs to identify any that are inconsistent with reality or appear to be out of place.

- Train the model to recognize and flag instances where it may be uncertain or lacks sufficient data to generate accurate outputs.

- Implement user-driven learning mechanisms where users can flag/rate untrue outputs.

- Inform users of the potential risk of AI hallucinations and how it may impact their safety and the reliability of your product.
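The user-flagging and user-notice steps above could be combined in something like the following Python sketch. It is only an illustration under stated assumptions: the `FlagTracker` class, the threshold of two flags, and the notice wording are hypothetical, and flags are kept in memory rather than in a real data store.

```python
from collections import Counter

# Hypothetical notice text shown next to answers that users have disputed.
NOTICE = "Some users reported this answer as inaccurate. Please verify it."


class FlagTracker:
    """Counts 'this is untrue' flags per output (in memory, for illustration)."""

    def __init__(self, review_threshold: int = 2):
        self.review_threshold = review_threshold
        self.flags = Counter()

    def flag(self, output_id: str) -> None:
        """Record one user report that the given output is untrue."""
        self.flags[output_id] += 1

    def needs_review(self) -> list:
        """Output ids flagged at least review_threshold times, most flagged first."""
        return [
            output_id
            for output_id, count in self.flags.most_common()
            if count >= self.review_threshold
        ]

    def notice_for(self, output_id: str):
        """Warning text for disputed outputs, or None for all others."""
        if self.flags[output_id] >= self.review_threshold:
            return NOTICE
        return None


tracker = FlagTracker()
tracker.flag("out-1")
tracker.flag("out-1")
tracker.flag("out-2")
```

The review list supports regular monitoring by the team, while the notice keeps users informed about answers that others have already questioned.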

Rapidly changing landscape

Last but not least, it is essential to recognize that generative AI technology constantly evolves and improves. Today’s decisions may be outdated tomorrow, as new technologies and improvements emerge almost daily. Keeping track of these developments can be challenging, which is why businesses and designers need to learn how to stay up-to-date, flexible, and adaptable to change. Failure to do so can make a product quickly obsolete as it fails to offer long-term value to users.

What to do?

Stay updated with the latest developments by following companies like OpenAI or Midjourney on Twitter. Sign up for newsletters like Lore.com, read articles, test new products, and build upon what others have discovered. Remember to be flexible in developing your product, anticipate change, and be prepared to pivot and adjust strategies as needed.

Summary

Designing and building AI products and services represents a new set of challenges we, as designers, must face. AI is a cutting-edge tool with immense potential to transform society and business. As designers and creators, we must be aware of the risks it poses and approach AI in product development with mindfulness.

The risks outlined in this article may not directly apply to your product, and addressing them does not guarantee success in creating AI-based products. However, they serve as a valuable starting point for categorizing risks and taking steps to mitigate potential issues.

I strongly encourage all designers and those working in product development to assess their products for these risks and share any findings from further research and testing. I will undoubtedly continue to do so. Let’s work together to discover the best practices for creating good AI-based product design.

And to finish up, I’d like to share the rules I try to follow when designing AI products. To be:

- Useful. AI should always be designed with the user’s needs in mind to provide the most effective solutions.

- Inclusive. AI should strive to create an environment without prejudice or barriers, ensuring everyone is included and valued.

- Innovative. AI should constantly push boundaries and innovate, improving the quality of life for its users.

- Trustworthy. AI should always act with integrity, creating trust with every action and safeguarding users’ privacy and data.

- Ethical. AI should be designed with an understanding of the ethical implications of its use and prioritize the well-being of its users while adhering to ethical standards.

- Ecological. AI should be designed with the best digital ecology practices and act to promote global well-being while minimizing its environmental impact.

- Agile. AI should be designed for quick iterations and changes, ensuring that it can evolve with the needs of its users.

- Transparent. AI should be transparent in its decision-making process and easy to double-check, ensuring users can trust its actions.

- Impartial. AI should not work to profit an individual or organization in an unethical way, remaining impartial and fair to all parties involved.