According to research done by IBM, up to 90% of all medical data consists of images. The revolution started with X-ray imaging in 1895, when Wilhelm Röntgen captured an image of his wife’s hand bearing a ring.

Since then, the use of X-rays and other medical imaging technologies has grown to an enormous scale. According to the WHO, an estimated 3.6 billion diagnostic medical examinations, such as X-ray imaging, are performed every year. This creates a great opportunity for machine learning to support the diagnostic and treatment processes.

Yet the case is not that simple.

This text covers:

- Traditional supervised workflow – learn about the traditional approach’s strengths and weaknesses

- Challenges in labeling medical data – discover why thousands of images without descriptions are simply not good enough

- Our solution – check out how our team leverages a novel solution to tackle the problem

- Challenges in self-supervised learning – see why the approach needs more research

Traditional supervised learning workflow

Machine learning in healthcare is commonly associated with impressive results in image recognition. According to Nature, a properly trained AI-based system detects up to 79.2% of cancer cases in radiology images, compared to 80.7% for a group of radiologists with over 10 years of experience each. The difference is not statistically significant.

This powerful ability comes from the fact that image recognition techniques are domain-agnostic, which can be counter-intuitive for humans. It is no challenge for a human to see the difference between a red balloon and a red ball. For an AI system, this is just as challenging as seeing the difference between a healthy lung and a diseased one. The key is in the availability of annotated images.

The challenge lies in data annotation. When it comes to balls, balloons, cats, vehicles or plants, annotation is not that difficult. But with medical data, it is one of the major blockers.

Why labeling medical data is so challenging

As mentioned above, the collection of medical data started around the turn of the 20th century. X-ray imaging found its mass application in the trenches of World War I, with Maria Skłodowska-Curie driving one of many mobile X-ray ambulances that gave French troops access to the latest diagnostic tech. Moreover, it’s likely that all the images gathered so far are annotated with a description delivered by the radiologists performing the examination.

Yet the challenges in data go much deeper:

- Compliance in gathering data – gathering legal, royalty-free and compliant images of dogs, cats or countless other subjects is usually a no-brainer. Yet when it comes to gathering medical images, challenges arise. The data needs to be as anonymous as possible without losing its core value. It needs to be legally obtained, with all acknowledgements accounted for, and those depicted in the image need to give informed consent. Also, the dataset needs to be as balanced and unbiased as possible, which poses yet another challenge. Last but not least, gathering this type of data requires special equipment, be that an X-ray machine or an MRI scanner, which is far less affordable and accessible than a camera.

- Multiple incompatible formats of data used – medical data is processed by medical institutions – and these have little to no motivation to use a single standard of data. With every manufacturer having its own data format, building a consistent and actionable dataset becomes challenging. These formats are rarely encountered and often proprietary. Also, any conversion or format standardization needs to be lossless in order to keep the data as pure as possible for the sake of model performance.

- Storage and accessibility – considering the challenge above, the accessibility and actionability of gathered data shouldn’t be taken for granted. There are solutions like Virtum that make medical imagery data accessible for human specialists and AI models alike, yet this is a novel approach and the majority of medical institutions struggle to store data, as they consider actionability to be less concerning.

- Internal biases – rare diseases are… well… rare. Considering this, the model can underperform with the least represented diseases. What’s more concerning, the model can be internally biased by the underrepresentation of particular social groups. Considering the fact that AI models are capable of reading a patient’s ethnicity from X-ray images and MRI scans, the problem is even more alarming.

- Labeling needs to be done by experts – it is not a challenge to find labelers who can identify a ball, a car, a boat or a cat in an image. But labeling X-ray scans for signs of cancer needs to be done by a group of trained experts. Their time costs money. There is also another issue: is their time better spent labeling data for AI or treating their patients?

- Takes time and money – all the challenges listed above make the dataset building process challenging and time consuming. And therefore – expensive.

Considering all the challenges above, gathering a dataset of 10 thousand annotated X-ray images depicting lungs with and without cancer is much easier said than done. That’s where self-supervised learning comes in.

Our solution

The Tooploox team has combined transfer learning with self-supervised learning in order to train a lung disease classification model on data with only a few annotated examples. One of the key elements of transfer learning is that the model starts from general knowledge. Capabilities are built on top of a network previously trained on a general dataset of non-medical images, including cats, cars or buildings.

This network has some “general capabilities” in image recognition, which are then fine-tuned using annotated datasets, gaining superhuman disease-spotting capabilities during the transfer learning process. The process can be compared to sending a preschooler who can spot a car and a cat to a radiology course.

Transfer learning can be compared to the preschool education of the neural network.

What is self-supervised learning

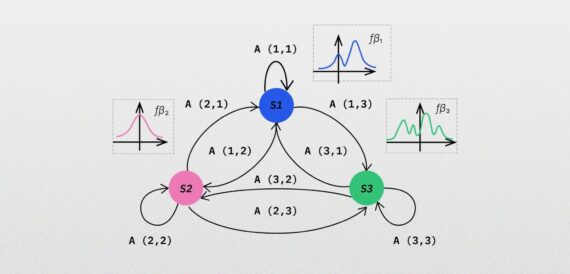

Self-supervised learning can be considered a bridge between unsupervised learning and supervised learning. In unsupervised learning, the machine explores a dataset and imposes the structure it finds most fitting. The technique is commonly used in large-scale data exploration and pattern-spotting. In supervised learning, the computer learns to map relationships in the data from labels.

In self-supervised learning, a model learns by attempting to predict pseudo-labels which it creates itself from the training data. Examples include predicting the next word in a sentence from the preceding text, or predicting a missing part of a cat image (for example the shape of an ear) from the rest of the image. This way the model constructs basic pseudo-labels without any intervention from the data scientist orchestrating the process.
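As a rough illustration of how pseudo-labels can come from the data itself, the sketch below builds next-word training pairs from raw text. The function name `next_word_pairs` and the `context_size` parameter are assumptions made for this example and are not part of the team's pipeline.

```python
def next_word_pairs(text, context_size=3):
    """Build (context, target) training pairs from raw, unlabeled text.

    The target word acts as the pseudo-label: it is taken from the data
    itself, so no human annotation is involved.
    """
    words = text.split()
    pairs = []
    for i in range(context_size, len(words)):
        context = tuple(words[i - context_size:i])  # the preceding words
        pairs.append((context, words[i]))           # the word to predict
    return pairs


pairs = next_word_pairs("the model learns structure from unlabeled data")
for context, target in pairs:
    print(context, "->", target)
```

The same idea carries over to images: hide or transform part of the input, and let the original content serve as the target.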

Exploring the dataset

The model is initially trained with unlabeled data. Whether these are images of cats, dogs, vehicles, lung cancer, blood cells or microscope images from industrial quality control doesn’t really matter. The key is in delivering a diverse and actionable dataset.

The data scientist can stimulate the model by delivering image transformations. Depending on the domain, images can be altered in multiple ways, including resizing, noise-addition or rotation. This further enriches the dataset and builds the model’s understanding of the domain.

Contrastive learning

In this particular example, the team used contrastive learning – the model was provided with a source image and a set of transformed images, and its task was to spot the differences and patterns connecting the source image and the transformed ones.
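One common way to express this objective is an InfoNCE-style loss, the kind used by contrastive methods such as MoCo. The sketch below is a minimal pure-Python version under stated assumptions: embeddings are plain lists of floats, the function names and the `temperature` default of 0.07 are illustrative choices, and none of this is the team's actual training code.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def normalize(v):
    """Scale a non-zero vector to unit length."""
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v]


def info_nce_loss(query, positive, negatives, temperature=0.07):
    """Contrastive loss for one query embedding.

    `query` and `positive` are embeddings of two transformed views of the
    same image; `negatives` are embeddings of other images. The loss is low
    when the query is more similar to its positive than to any negative.
    """
    q = normalize(query)
    keys = [normalize(positive)] + [normalize(n) for n in negatives]
    # Cosine similarities scaled by the temperature; index 0 is the positive.
    logits = [dot(q, k) / temperature for k in keys]
    # Numerically stable log-sum-exp, giving cross-entropy with target 0.
    top = max(logits)
    log_sum_exp = top + math.log(sum(math.exp(l - top) for l in logits))
    return log_sum_exp - logits[0]


query = [1.0, 0.0]
matching_view = [0.9, 0.1]
other_images = [[0.0, 1.0], [-1.0, 0.0]]
print(info_nce_loss(query, matching_view, other_images))
```

Minimizing this loss pulls the views of one image together in embedding space and pushes views of different images apart, without using any labels.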

The model was trained on the CheXpert dataset, which consists of over 220 thousand chest X-ray images. The team selected only frontal images (roughly 200k images) divided into 10 classes that are not mutually exclusive, including:

- Pleural Effusion

- Pneumothorax

- Atelectasis

- Pneumonia

- Edema

- Consolidation

- Lung Opacity

- Cardiomegaly

The dataset also included images of healthy lungs. The data was later split into 200k non-labeled examples to perform the initial training and a tuning set of 10k labeled images.
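Because the classes are not mutually exclusive, a single image can carry several findings at once, which is typically represented as a multi-hot vector. The sketch below assumes only the eight classes named above (the article does not list the remaining two), and the helper name `encode_findings` is hypothetical.

```python
# Only the classes named in the text; the full label set has 10 entries.
CLASSES = [
    "Pleural Effusion",
    "Pneumothorax",
    "Atelectasis",
    "Pneumonia",
    "Edema",
    "Consolidation",
    "Lung Opacity",
    "Cardiomegaly",
]


def encode_findings(findings):
    """Turn a list of finding names into a multi-hot label vector.

    An image of healthy lungs has no findings and maps to all zeros.
    """
    unknown = set(findings) - set(CLASSES)
    if unknown:
        raise ValueError(f"Unknown findings: {sorted(unknown)}")
    return [1 if name in findings else 0 for name in CLASSES]


print(encode_findings(["Edema", "Cardiomegaly"]))
print(encode_findings([]))  # healthy lungs
```

With this encoding, the classifier predicts each finding independently instead of picking exactly one class per image.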

Choosing the transformations

During self-supervised pre-training, the model builds its own understanding of the domain, finding patterns in the data. These patterns vary from completely insignificant (images with repetitive background noise) to vital (patterns that can be later recognized as a sign of a disease, or any health-related red flag).

The model can end up with dozens of patterns it can spot and only a few of them may be useful later. For example, the model spots patterns represented in the lungs of adult people, children, the elderly, women, men, smokers, non-smokers and so on.

In this particular case, the challenge was in choosing transformations that would not harm the model’s performance. For example, one of the available methods is to blur a part of an image. Yet a blurred region can itself be a marker of one of the diseases in question. Changing the color would not work either, as the images are grayscale and every change of shade is significant.

The team has chosen three methods:

- Random rotation by up to 10 degrees

- Horizontal flip

- Random perspective
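To make the first two transformations concrete, the sketch below applies a random rotation of up to 10 degrees and a random horizontal flip to a grayscale image stored as a nested list. It is a dependency-free illustration, not the team's implementation: the function names, the 0.5 flip probability and the zero fill value are assumptions, and the random perspective transform is omitted for brevity. In practice an image library would normally handle all three.

```python
import math
import random


def horizontal_flip(image):
    """Mirror each row of a 2D grayscale image."""
    return [row[::-1] for row in image]


def rotate(image, degrees, fill=0):
    """Rotate around the image centre with nearest-neighbour sampling.

    Nearest-neighbour sampling copies existing pixel values instead of
    blending them, so no new shades of gray are introduced.
    """
    height, width = len(image), len(image[0])
    cy, cx = (height - 1) / 2, (width - 1) / 2
    theta = math.radians(degrees)
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    out = [[fill] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            # Inverse mapping: find the source pixel for each output pixel.
            dx, dy = x - cx, y - cy
            src_x = round(cx + cos_t * dx + sin_t * dy)
            src_y = round(cy - sin_t * dx + cos_t * dy)
            if 0 <= src_x < width and 0 <= src_y < height:
                out[y][x] = image[src_y][src_x]
    return out


def random_view(image, rng, max_degrees=10.0, flip_probability=0.5):
    """Produce one randomly transformed view of an image."""
    view = rotate(image, rng.uniform(-max_degrees, max_degrees))
    if rng.random() < flip_probability:
        view = horizontal_flip(view)
    return view


rng = random.Random(0)
image = [[0, 50, 100], [50, 100, 150], [100, 150, 200]]
view_a = random_view(image, rng)
view_b = random_view(image, rng)  # a second view of the same image
```

Two such views of the same image form a positive pair for contrastive pre-training.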

Fine-tuning the model pre-trained with self-supervised learning (SSL)

In our particular case, the tuning was done using labeled images of lungs with the diseases listed above.

The key concept was that the model should first learn to spot the patterns of diseased lungs on its own, since these differ from other lungs in a systematic way. The labeled data was used only to name each pattern and distinguish it from the others.

In other words – the model leverages the “general knowledge” about lungs it has already gathered.

Results

To validate the effectiveness of this approach, the Tooploox team used ResNet-18 pre-trained on ImageNet as a baseline. The baseline was fine-tuned using the same number of annotated images as the SSL models.

The performance metric was the area under the ROC curve (AUC), which measures how well the model separates positive cases from negative ones. To make the measurement more reliable, each model was trained ten times.
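For intuition, AUC equals the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative one. The sketch below computes it directly from that definition and summarizes repeated runs. The function names are assumptions made for this example, the toy labels and scores are illustrative only, and averaging over runs is an assumed way of using the ten repetitions.

```python
from statistics import mean, stdev


def roc_auc(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly.

    Ties count as half a correct ranking. This pairwise form is quadratic
    in the number of examples, which is fine for a small illustration.
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


def summarize_runs(aucs):
    """Mean and sample standard deviation of AUC over repeated runs."""
    return mean(aucs), stdev(aucs)


# Toy data for illustration only, not results from the study.
labels = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]
print(roc_auc(labels, scores))  # 3 of 4 pairs ranked correctly: 0.75
```

A value of 0.5 corresponds to random ranking and 1.0 to perfect separation, so differences between models are usually reported in percentage points of AUC.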

In the end, the models delivered by the Tooploox team show significantly higher performance than the baseline model.

According to the team’s research, the smaller the labeled dataset, the greater the gain from using the self-supervised learning approach.

The differences range from 10 percentage points (pp) when using a dataset of 250 labeled images to 6 pp when using a dataset of 10k labeled images.

The gain also varies with the type of disease to be identified in an image. The research has shown that the greatest gain is seen in the categories with the least annotated data.

Evaluating the model

The explainability and evaluation of the ML model was one of the key components. Models in the healthcare field are expected to make decisions regarding the health of patients, and when the factors that are considered are not fully explainable, the model comes with high risk.

To check the model for hidden biases, the team examined its Pleural Effusion predictions. As the tests have shown, the model correctly recognized the symptoms and focused on the areas that are most commonly affected.

Yet the model also relied on some factors that hurt its overall accuracy. One of the tests has shown that the model checks for ECG electrodes, apparently assuming that a patient with electrodes attached is probably ill.

Challenges to overcome in self-supervised learning

The results look promising and applying this approach can support AI adoption in fields where labeling data remains challenging. Yet it shouldn’t be seen as a remedy for all issues that labeling data faces today.

The key challenges in using this approach are:

- Data transformations need to fit the project – the transformations usually include adding noise, slightly changing the color or blurring. Yet such transformations can poison the dataset instead of augmenting it if applied incorrectly.

- More tech work – there are multiple things that need to be handled manually in self-supervised learning. The process requires much greater skill and computing power than the traditional supervised learning approach which is a well established process, polished by thousands of trial-and-error sessions.

- The approach still needs human supervision in the end – last but not least, as with every AI-based solution, the self-supervised learning project requires a great deal of human supervision.

Summary

Self-supervised learning can significantly improve X-ray classification performance. The fewer labeled images there are in the dataset, the greater the gain.

The tests also show that the model has no “common sense” when it comes to finding patterns in data: noise or irrelevant details (like an electrode) can be treated as a prediction factor.

Lessons learned

- SSL can improve X-ray classification performance.

- This improvement is especially significant with very few labeled examples.

- MoCo (Momentum Contrast) is a very good method, particularly well-suited for single-GPU training (small batches).

- Explainability is a must-have in medical AI applications.

The research was conducted by Michał Oleszak, Jakub Kubajek, Joanna Kaczmar-Michalska, Piotr Marcol, and Maciej Koczur.