Apple’s annual, week-long Worldwide Developers Conference (WWDC) kicked off on June 10th in Cupertino. The event focuses on news and updates in Apple’s operating systems. It includes keynote presentations showcasing the biggest changes in the newest versions of iOS, macOS, and other platforms, as well as sessions on API changes, labs, and Q&A sessions dedicated to developers creating software for Apple devices.

To say that this year’s edition was highly anticipated is an understatement. The 2022 premiere of ChatGPT and the rapid development of AI-based tools have drastically changed the way we interact with technology. Current trends in software development focus heavily on integrating AI solutions into existing tools wherever possible. These include image-generating tools, more accurate search engines that provide personalized answers, and assistance in creating textual content. With so many possibilities, the mobile industry is racing to adopt these new approaches and change how we interact with our devices.

One trend worth noting is the development of on-device models that can run AI features without relying on server components. Today, most successful AI solutions require internet access to perform even basic operations, which adds cost and latency. However, with increasingly powerful device components, it is now possible to delegate at least some of these tasks to the device’s own hardware. This approach can improve efficiency, enhance privacy, and reduce dependency on external servers.

Last month’s Google I/O conference, which introduced the on-device Gemini Nano models integrated into Android devices, set high expectations for Apple’s response. I can already say that Apple did not disappoint us, the Tooploox iOS developers. Many changes and features were introduced, sparking our keen interest in how we can leverage the new frameworks in the apps we develop. Here are the most intriguing announcements from this year’s conference.

A.I. = Apple Intelligence

First and foremost is the integration of Apple’s on-device artificial intelligence solution: Apple Intelligence. This personal intelligence system for Apple devices combines the power of generative models with personal context to deliver relevant and useful intelligence to the user. Leveraging Apple silicon, it understands and generates natural language, creates images, and takes action across apps to simplify and accelerate everyday tasks. To enhance the experience, it is deeply integrated with the operating system so that it can draw on the user’s personal context, such as their photo library, mail inbox, browser search history, calendar events, and more.

[https://www.apple.com/newsroom/2024/06/introducing-apple-intelligence-for-iphone-ipad-and-mac/]

As intimidating as this may sound, a key feature of Apple Intelligence is its on-device processing, which delivers personal intelligence without collecting user data. When a request needs more computational capacity than the device can offer, Private Cloud Compute lets Apple Intelligence scale between on-device processing and larger, server-based models running on dedicated Apple silicon servers. Apple presents this as a new standard for privacy in AI.

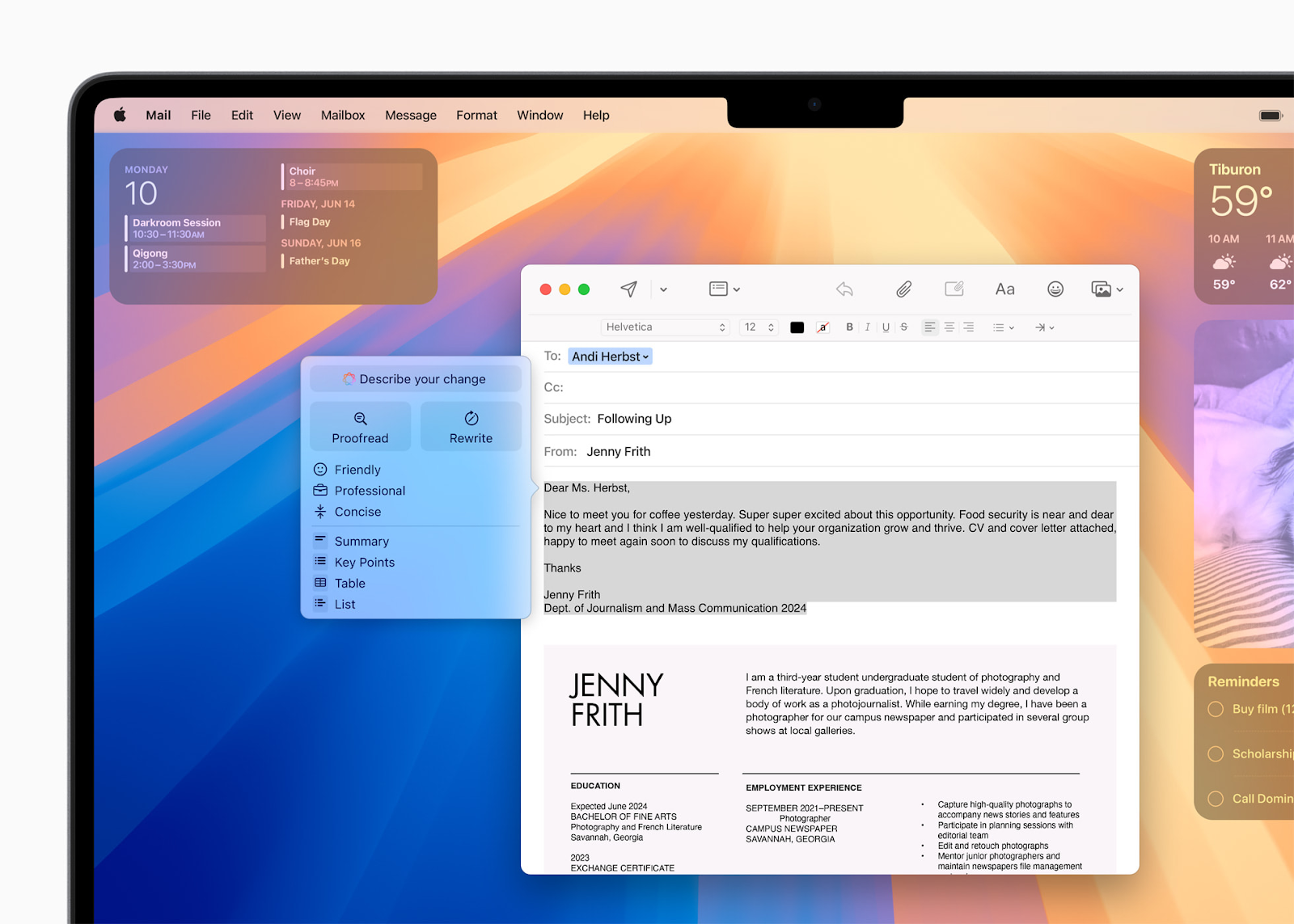

So how can an iPhone user make use of this new addition? One of the features built on Apple Intelligence is the newly introduced Writing Tools. Available system-wide in iOS 18, iPadOS 18, and macOS Sequoia, it can help rewrite, proofread, and summarize text in nearly any application where the user writes. Another example of A.I. use is simplified interaction with the Photos app, which lets users search for a picture using natural language descriptions.

iOS 18 will come with many integrated A.I. solutions. Because these capabilities are exposed through various Swift frameworks and APIs, developers can build on them in their own apps, which leaves even more to discover.

Make Siri Great Again

After many years, one of the most popular personal assistants finally receives a long-awaited update. Now powered by Apple Intelligence, Siri is better integrated into the operating system and boasts richer language-understanding capabilities. The assistant is more aware of context and can follow a user even if they hesitate or stumble over their words. Additionally, Siri can now provide device support and answer questions about using an iPhone. With onscreen awareness, Siri will be able to understand and take action on user content in more apps over time. What’s more, ChatGPT is integrated with the personal assistant: when a request could benefit from it, Siri can hand the task or question over to the OpenAI chatbot, asking the user for permission first. [https://www.apple.com/newsroom/2024/06/introducing-apple-intelligence-for-iphone-ipad-and-mac/]

Need an image of a cat wearing a sombrero sitting on a couch and eating popcorn? We’ve got you.

Apple has placed significant emphasis on the generative aspects of AI. Beyond the text generation capabilities of Writing Tools, several image-generating solutions were announced.

With Image Playground, users can create images on their devices by choosing from three styles: Animation, Illustration, or Sketch. This tool is integrated into apps like Messages and is also available as a standalone app. A user can select from a range of concepts, type a description to define a desired image, or choose someone from their photo library to include in the image. The tool comes with the Image Playground API, which allows third-party developers to incorporate this feature into their own apps.
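To give a feel for what adopting the Image Playground API might look like, here is a minimal SwiftUI sketch. It assumes the `ImagePlayground` framework’s `imagePlaygroundSheet` view modifier and the `supportsImagePlayground` environment value as we understand them from Apple’s SDK; the view name and the prompt are purely illustrative, and the exact signatures should be checked against the current documentation before relying on them.

```swift
import ImagePlayground
import SwiftUI

// Hypothetical view; the names are illustrative and not taken from Apple's samples.
struct CatPosterView: View {
    // Reports whether the current device can run Image Playground at all.
    @Environment(\.supportsImagePlayground) private var supportsImagePlayground
    @State private var isPlaygroundPresented = false
    @State private var generatedImageURL: URL?

    var body: some View {
        VStack(spacing: 16) {
            if let generatedImageURL {
                // The sheet hands back a file URL for the generated image.
                Text("Generated: \(generatedImageURL.lastPathComponent)")
            }
            if supportsImagePlayground {
                Button("Generate image") { isPlaygroundPresented = true }
            } else {
                Text("Image Playground is not available on this device.")
            }
        }
        // Presents the system image-generation UI, seeded with a text concept.
        .imagePlaygroundSheet(
            isPresented: $isPlaygroundPresented,
            concept: "A cat wearing a sombrero, sitting on a couch and eating popcorn",
            onCompletion: { url in
                // Assumption: treat the URL as temporary and copy the file
                // elsewhere if the image needs to be kept.
                generatedImageURL = url
            }
        )
    }
}
```

The app never talks to the model directly in this sketch: it only supplies a concept and receives the finished image, while the system sheet handles style selection and generation.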

Another generative feature is Genmoji, which creates custom emoji from a typed description or a photo. The generated emoji can then be added inline to a message or shared as a sticker in the Messages app.

Houston, we… can control everything

iOS 18 introduces a major redesign of the iPhone’s Control Center. A user can reorganize their controls more freely and group them as they like. With the new API, third-party applications can implement their own controls, providing quick access to their features without opening the app. What’s more, the update lets users personalize their Lock Screen with shortcuts and widgets from both native and third-party apps.

[https://developer.apple.com/videos/play/wwdc2024/101]
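As a rough idea of what a third-party control could look like, the sketch below defines a toggle using WidgetKit’s `ControlWidget` and an App Intents `SetValueIntent`. The focus-timer feature, the `FocusTimerStore` helper, and the `kind` identifier are hypothetical and exist only for illustration. We also assume an iOS 18 deployment target and that the control lives in a widget extension, registered in its `WidgetBundle`.

```swift
import AppIntents
import SwiftUI
import WidgetKit

// Hypothetical storage helper. A real app would likely use an App Group
// container so that the app and its widget extension share the same state.
enum FocusTimerStore {
    private static let key = "focusTimerIsRunning"

    static var isRunning: Bool {
        get { UserDefaults.standard.bool(forKey: key) }
        set { UserDefaults.standard.set(newValue, forKey: key) }
    }
}

// The intent the system performs when the user flips the toggle.
// `value` carries the new on/off state chosen by the user.
struct ToggleFocusTimerIntent: SetValueIntent {
    static let title: LocalizedStringResource = "Toggle Focus Timer"

    @Parameter(title: "Running")
    var value: Bool

    func perform() async throws -> some IntentResult {
        FocusTimerStore.isRunning = value
        return .result()
    }
}

// The control itself, which users can add to Control Center.
struct FocusTimerControl: ControlWidget {
    var body: some ControlWidgetConfiguration {
        StaticControlConfiguration(kind: "com.example.focus-timer") {
            ControlWidgetToggle(
                "Focus Timer",
                isOn: FocusTimerStore.isRunning,
                action: ToggleFocusTimerIntent()
            ) { isOn in
                Label(isOn ? "Running" : "Stopped", systemImage: "timer")
            }
        }
    }
}
```

The control is declarative: it describes its label, its current state, and the intent to run, and the system decides where and how to render it.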

Copilot, but the Apple way

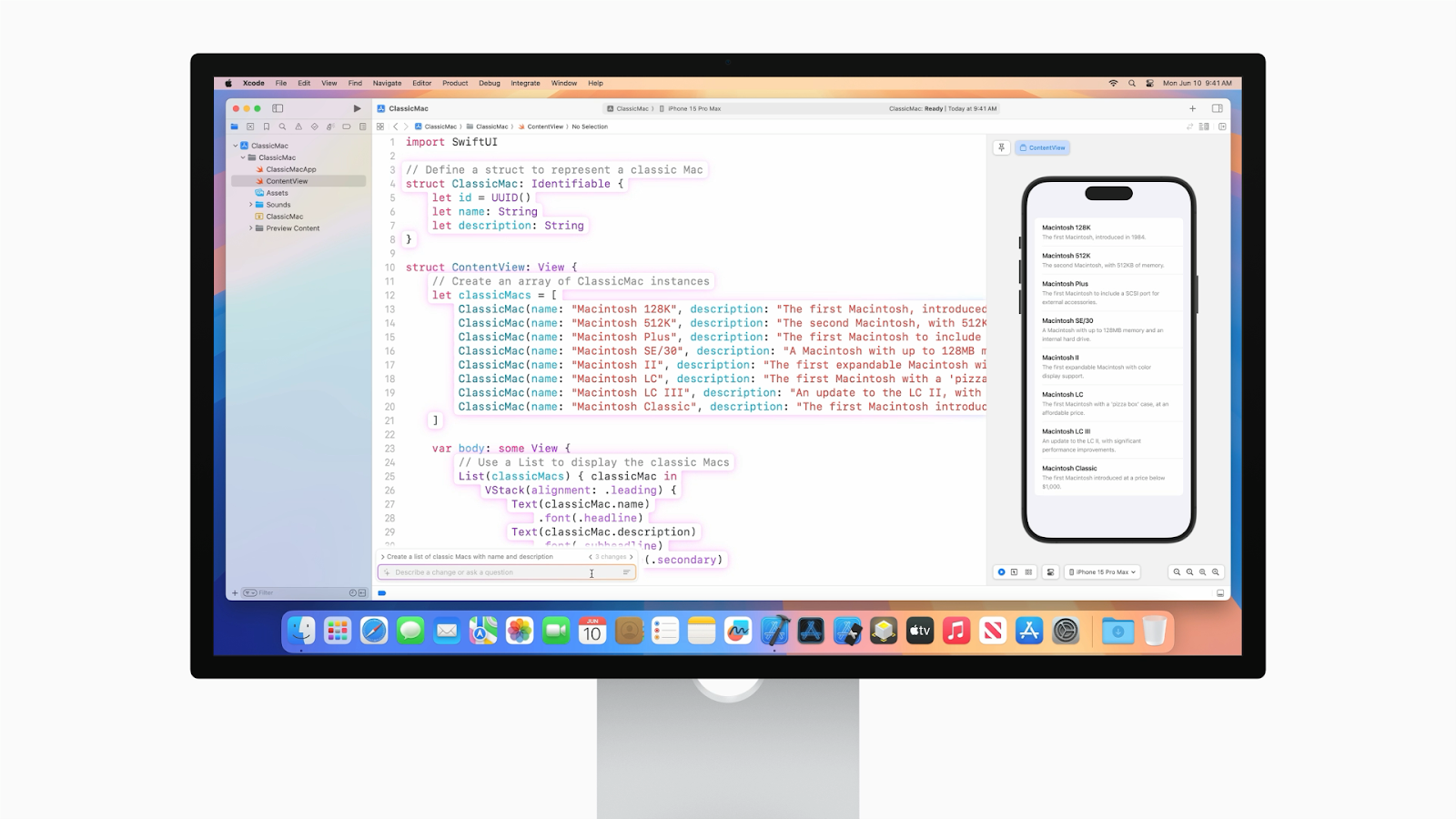

The conference brought not only updates to the operating systems but also changes to development tools, including those coming in Xcode 16. The most interesting new feature is undoubtedly Swift Assist, Apple’s answer to GitHub Copilot.

Swift Assist helps with the implementation of coding tasks, allowing developers to focus on higher-level problems and solutions instead. Integrated into the Xcode IDE, it stays current with the latest SDKs and Swift features, so the code it generates is meant to blend seamlessly into existing projects. Swift Assist simplifies tasks like integrating new frameworks and experimenting with different approaches. It uses a model that runs in the cloud and, like other Apple developer services, it is built with privacy and security in mind. According to Apple, the app’s code is only used to process requests and is never stored on servers, and Apple does not use it to train machine learning models.

[https://developer.apple.com/videos/play/wwdc2024/10135/]

What’s next?

During the week-long conference, countless topics, new features, enhancements, and frameworks were discussed, leaving a lot to discover and test. Currently, beta versions of the new operating systems, such as iOS 18 and macOS Sequoia, are available for developers to download. We are eager to try out the new features, prepare for their expected official release in September, and learn how to enhance our apps with these new capabilities. We’re especially excited about experimenting with the new AI integrations to take Tooploox apps to the next level. Stay tuned, as we’ll be happy to share our thoughts on the new features once we’ve had hands-on experience and have seen how they work in practice.